
9/3/2023 0 Comments Redshift sqlRedshift Date FunctionsĪmazon Redshift provides many functions to handle the date data types. Each RDBMS may employ different date functions, and there may also be differences in the syntax for each RDBMS even when the function call is the same. Each date value contains the century, year, month, day, hour, minute, and second. Date types are highly formatted and very complicated. We initialize a new NumPy array and pass the cursor containing the query results as a parameter.In the real word scenarios many application manipulate the date and time data types. It is quite straightforward to turn your data into a NumPy array. No matter what kind of analysis you wish to do, you will need to use one of these two libraries to represent your initial data. It provides a high-performance data structure called DataFrame for working with table-like structures. pandas is a widely-used data analysis library in Python.NumPy is a popular Python library used mainly for numerical computing.When it comes to Python, the most popular libraries for data analysis are NumPy and pandas: Now that we have successfully queried our Redshift data and fetched it for our analysis, it is time to work on it using the data analysis tools we have at our disposal. You can also use SQL to do large chunks of your data preprocessing and set up a proper dataset to make your data analysis a lot easier.įor example, you can join multiple tables in the database, or use the Redshift-supported aggregation functions to create new fields as per your requirement. The most significant bit here is the SQL query that we execute to fetch the records from the dataset. That said, please feel free to experiment with any other library of your choice to do so. We will use the psycopg Python driver to connect to our Redshift instance. Hence, you can safely use the tools you’d use to access and query your PostgreSQL data for Redshift. 
As mentioned above, Redshift is compatible with other database solutions such as PostgreSQL. To access your Redshift data using Python, we will first need to connect to our instance.

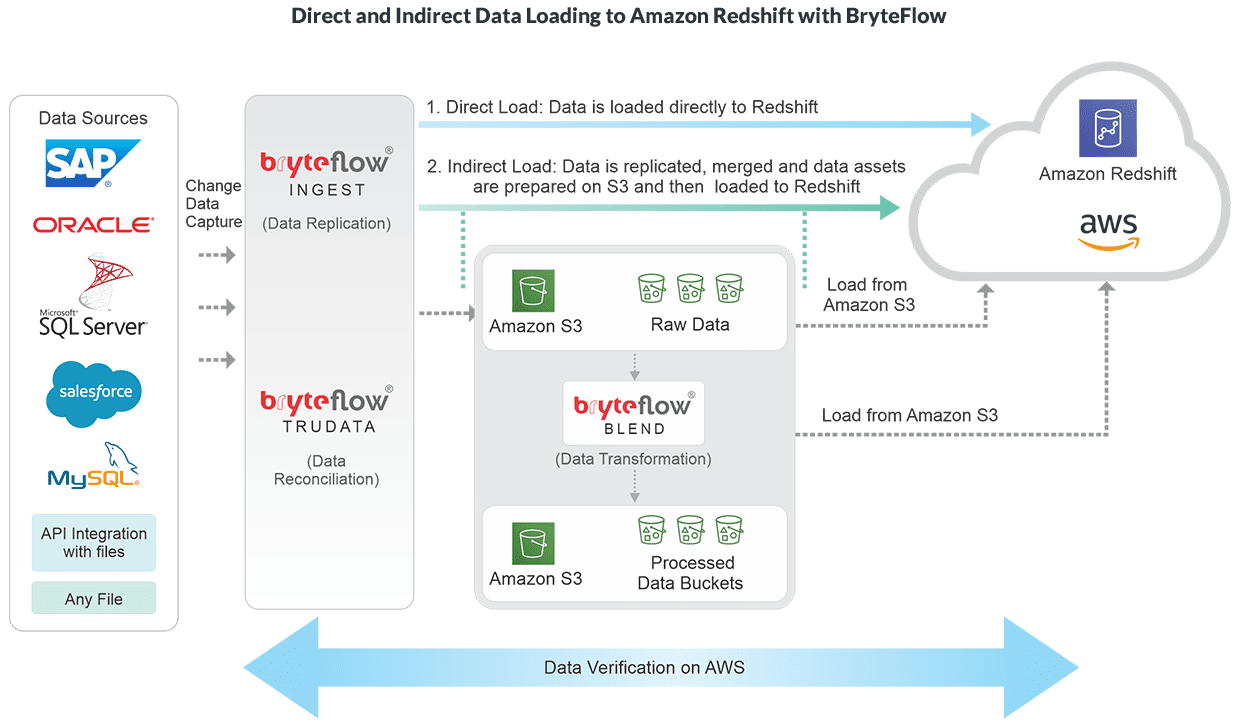

Connecting to Your Redshift Data Using Python They allow you to collect your data across all the customer touch-points, and load them securely into Redshift – or any other warehouse of your choice, with minimal effort. In case you haven’t, the best way to load your data to Redshift is to leverage Customer Data Infrastructure tools such as RudderStack. For this post, we assume that you have the data already loaded in your Redshift instance.

We also have a dedicated blog for manipulating and querying your Google BigQuery data using Python and R, in case you are interested. Some of the insights that you can get include a better understanding of your customers’ product behavior, predicting churn rate, etc. The purpose of this exercise is to leverage the statistical techniques available in Python to get useful insights from your Redshift data. We will then store the query results as a dataframe in pandas using the SQLAlchemy library. Since Redshift is compatible with other databases such as PostgreSQL, we use the Python psycopg library to access and query the data from Redshift. Querying the data and storing the results for analysis.Connecting to the Redshift warehouse instance and loading the data using Python.In this post, we will see how to access and query your Amazon Redshift data using Python.
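The workflow described in this post (connect with psycopg, run a SQL query, then hand the rows to NumPy or pandas) might be sketched roughly as follows. This is a minimal illustration under several assumptions, not the post's original code: it assumes the `psycopg2` package, the `events` table and its `event_time` column are hypothetical, the host and credentials are placeholders, and the DataFrame is built directly from the fetched rows rather than through SQLAlchemy. The query uses `DATE_TRUNC`, one of Redshift's date functions, to show how preprocessing can be pushed into SQL.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical table and column names, used only for illustration.
# DATE_TRUNC groups events by calendar month inside Redshift.
MONTHLY_EVENTS_SQL = """
    SELECT DATE_TRUNC('month', event_time) AS event_month,
           COUNT(*) AS event_count
    FROM events
    GROUP BY 1
    ORDER BY 1
"""


def connection_params(host: str, dbname: str, user: str, password: str,
                      port: int = 5439) -> Dict[str, Any]:
    """Build keyword arguments for psycopg2.connect().

    5439 is the default Redshift port; override it if your cluster differs.
    """
    return {"host": host, "port": port, "dbname": dbname,
            "user": user, "password": password}


def fetch_rows(cursor: Any, sql: str) -> List[Tuple[Any, ...]]:
    """Execute a query on a DB-API cursor and return all rows as tuples."""
    cursor.execute(sql)
    return list(cursor.fetchall())


def run_example() -> None:
    """Connect, query, and load the result into NumPy and pandas."""
    # Imported here so the helpers above work without these packages.
    import numpy as np
    import pandas as pd
    import psycopg2

    # Placeholder connection details (assumptions, replace with your own).
    conn = psycopg2.connect(**connection_params(
        host="example-cluster.redshift.amazonaws.com",
        dbname="dev", user="analyst", password="change-me"))
    try:
        with conn.cursor() as cur:
            rows = fetch_rows(cur, MONTHLY_EVENTS_SQL)
            # cursor.description holds one entry per result column;
            # the first field of each entry is the column name.
            columns = [desc[0] for desc in cur.description]
    finally:
        conn.close()

    data = np.array(rows)                         # NumPy representation
    frame = pd.DataFrame(rows, columns=columns)   # pandas representation
    print(data.shape)
    print(frame.head())
```

Calling `run_example()` requires a reachable Redshift cluster and valid credentials. The two helper functions are kept separate so that the query logic can be exercised with any DB-API cursor.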
